I spent a good part of a morning this week starting to audit writing in a junior school. The school is in particularly challenging circumstances and there has been very rapid staff turnover; the Year 6 class is on its third teacher this year.

We discussed what the best assessment tool was for this task. The school was happy to trial the CLPE scales – they liked the fact that they are based on research, are supported by the various subject associations and provide suggested next steps which will support teachers with their planning. We were also keen to trial the KS2 criteria for the expected standard. Thirdly, we decided to use the old National Curriculum levels, partly because, contrary to what was said, we are all very familiar with them, and partly to provide a clear comparison.

It did take quite a long time. The new descriptors took the longest to apply. Because I don’t know the children, I could not use my knowledge of them over time, as the webinar presenters suggested, and I concede that this would have helped me to be speedier. I did, though, find it much quicker to absorb and apply the CLPE descriptors. The old National Curriculum levels, obviously, were the easiest, simply because they are the most familiar.

What was a bit worrying was the set of outcomes. These were the first pupils out of the box.

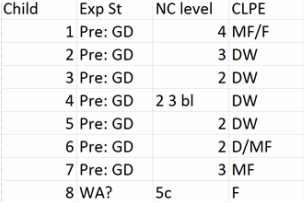

As you can see, the old levels showed a range of achievement from level 2 to level 5, and this is reflected in the assessments against the CLPE criteria. The judgements based on the Interim Criteria were a different story. In many cases, it was the failure to meet just one of the statements which kept children below the Working Towards standard. It seemed horrendously harsh to judge them on the Pre KS2 standards – there’s such a huge gulf between them and Working Towards.

I was also very conscious of the arbitrariness of the criteria – children are required to use a variety of verb forms, to use modals and passives, but there is no way to distinguish between those who are managing subject-verb agreement and those who aren’t. Similarly, there is no distinction between those who merely group ideas roughly into paragraphs and those who can structure paragraphs effectively and sequence them logically.

Ultimately, because the criteria concern themselves only with the grammatical and orthographical features of writing, there is no consideration of the writing’s communicative impact. Surely, this is what any assessment of writing should be primarily concerned with, with the means used to achieve the impact being important, but secondary.

During Friday’s webinar, the speakers discussed the reason for using the ‘secure fit’ – or as I prefer to think of it, the ‘cliff edge’ – model rather than the best fit. The argument was that, in previous years, receiving teachers struggled with the fact that a child could achieve a level in a number of different ways, and didn’t know what gaps there might be in the knowledge or skills of a child who had been given a particular level.

When I was teaching in a secondary school, I don’t think either I or any of my colleagues struggled with this. We were using the same levels and understood the range of performance they represented.

Now that there is no longer a unified system of levels, I wonder how many secondary English teachers, grappling as they are with new GCSEs, will have had time to understand these Interim Criteria.

As a receiving teacher, being told that a child is Working Towards only tells me that there’s something the child can’t do. It doesn’t tell me what that is, and it might just be that the child has never used a semicolon. It doesn’t tell me whether the child can communicate ideas clearly and effectively, or whether they can structure and sequence their ideas.

If the assessments I made this week were passed up to a secondary school (they won’t be; the children will make plenty of ground between now and June), I would have no idea that the children who had been assessed on the Pre KS2 criteria could do so much more than meet those criteria, and that would undoubtedly affect my expectations of them.

The potential for this assessment process to damage young writers’ self-esteem has been discussed at length in many blogs, as has the confusion parents are likely to experience when trying to understand their child’s results. I have written at length about the workload implications, as have others.

If we are to take all these risks, then the results we get from these assessments need to be worth it – they need to represent a picture of what a child can do in writing and differentiate between children with different levels of achievement. The sad fact is that these criteria don’t. They are simply not fit for purpose.
